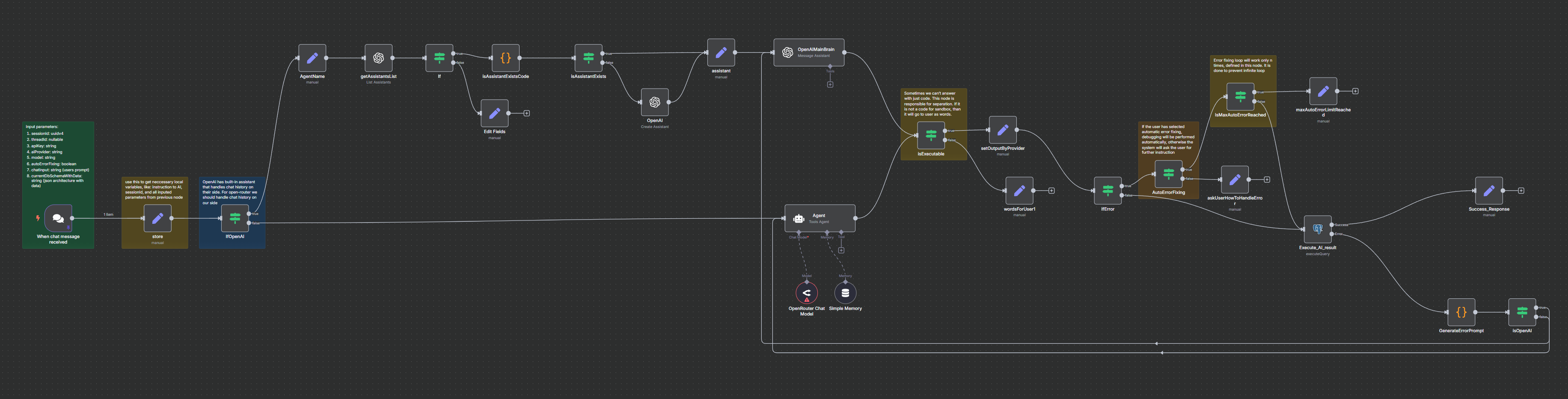

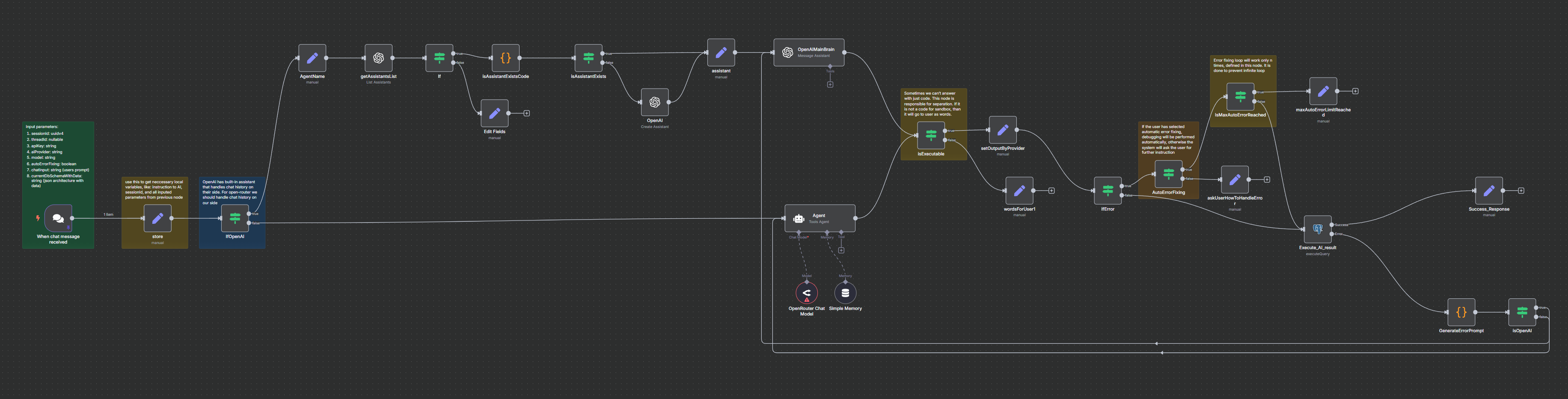

It generated the SQL query and completed the task in three attempts (see the bottom of the image). This means it generated an initial query to solve the task, executed it in a sandbox, encountered an error, fixed it, encountered another error, fixed it again, and finally produced a working SQL query and returned the execution result.
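The generate, execute, fix cycle described above can be sketched roughly as follows. This is only an illustration under stated assumptions, not QueryVerify's actual implementation: an in-memory SQLite database stands in for the sandbox, and `run_with_retries` and `make_stub_generator` are hypothetical names. The stub replays three canned queries where the real tool would call a model.

```python
import sqlite3

# Hypothetical mock schema and data loaded into the sandbox.
SCHEMA = """
CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL);
INSERT INTO orders (customer_id, total) VALUES (1, 10.0), (1, 5.0), (2, 7.5);
"""

def run_with_retries(generate_sql, schema_sql, max_attempts=3):
    """Ask for a query, run it in a fresh sandbox, and feed errors back."""
    previous_sql, error = None, None
    for attempt in range(1, max_attempts + 1):
        sql = generate_sql(previous_sql, error)
        conn = sqlite3.connect(":memory:")  # fresh, isolated sandbox per attempt
        try:
            conn.executescript(schema_sql)
            rows = conn.execute(sql).fetchall()
            return {"attempts": attempt, "sql": sql, "rows": rows}
        except sqlite3.Error as exc:
            # Keep the failing query and its error for the next attempt.
            previous_sql, error = sql, str(exc)
        finally:
            conn.close()
    raise RuntimeError(f"no working query after {max_attempts} attempts: {error}")

def make_stub_generator():
    """Stand-in for the model (assumption): two broken queries, then a fix."""
    candidates = iter([
        "SELECT customer, SUM(total) FROM orders GROUP BY customer",       # unknown column
        "SELECT customer_id, SUM(total) FROM order GROUP BY customer_id",  # reserved word as table name
        "SELECT customer_id, SUM(total) FROM orders "
        "GROUP BY customer_id ORDER BY customer_id",
    ])
    def generate(previous_sql, error):
        return next(candidates)
    return generate

result = run_with_retries(make_stub_generator(), SCHEMA)
print(result["attempts"], result["rows"])
```

With this stub, the first two attempts fail with SQLite errors and the third one returns the aggregated rows, which mirrors the three-attempt run shown in the image.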

You can review the entire process step by step. By comparing the “Execution Result” with “Your Current DB Tables,” you can verify whether the query behaves as expected. We plan to further automate this verification process to assist users with validation. For more details, please visit the Roadmap page.

As you can see, this method isolates the task and uses only the required tables and columns. This was the main purpose of using the sandbox.
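To make the isolation idea concrete, here is a minimal sketch of building a mock schema that contains only the tables and columns a task needs. How QueryVerify actually generates its mock schema is not described here, so everything in this example is an assumption: `FULL_SCHEMA`, `build_mock_schema`, and the `required` mapping are hypothetical, and SQLite is used only because it runs in memory.

```python
import sqlite3

# Hypothetical description of the real database: table -> {column: type}.
FULL_SCHEMA = {
    "orders": {"id": "INTEGER", "customer_id": "INTEGER", "total": "REAL", "notes": "TEXT"},
    "customers": {"id": "INTEGER", "name": "TEXT", "email": "TEXT"},
    "audit_log": {"id": "INTEGER", "payload": "TEXT"},
}

def build_mock_schema(full_schema, required):
    """Emit CREATE TABLE statements for the required tables and columns only."""
    statements = []
    for table, columns in required.items():
        column_defs = ", ".join(f'"{col}" {full_schema[table][col]}' for col in columns)
        statements.append(f'CREATE TABLE "{table}" ({column_defs});')
    return "\n".join(statements)

# Assumed outcome of analysing the task: which tables and columns it touches.
required = {"orders": ["customer_id", "total"], "customers": ["id", "name"]}
mock_ddl = build_mock_schema(FULL_SCHEMA, required)

# Load the reduced schema into an in-memory sandbox and inspect it.
sandbox = sqlite3.connect(":memory:")
sandbox.executescript(mock_ddl)
sandbox_tables = [row[0] for row in sandbox.execute(
    "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name")]
order_columns = [row[1] for row in sandbox.execute('PRAGMA table_info("orders")')]
sandbox.close()

print(sandbox_tables, order_columns)
```

The sandbox never sees `audit_log` or the unused columns, so a generated query can only depend on what the task actually requires.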

Tip: If you didn't get the result you expected (in "Your Current DB Tables", in the mock schema generation, or in "Execution Result"), spend 2-3 minutes trying to understand why. If the result differs a lot from what you expected, just start a New Chat and ask the same prompt again.

Another Tip: If you give it a very complex task, you may not get the expected result even after 50 New Chat iterations. In that case, decompose the task into smaller parts yourself and give each part to QueryVerify separately. You will have to assemble the final query yourself, but it will be much faster, and you will know that each part works as expected. To find out whether a task is too complex, try 3-4 New Chat iterations and see if it works.

Instead of Scientific results

We tested the complex prompt `How to check if all {pattern}_id foreign columns have indexes or not through all tables` with nano models, and it succeeded in 2 iterations (with 1 error). This suggests that the technique can be used to generate working SQL queries efficiently.
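For a sense of what that prompt asks for, here is one possible way to perform the check. This is a sketch, not the query QueryVerify produced. The target database is not named above, so SQLite and its `PRAGMA` introspection are an assumption, as are the helper name `unindexed_id_columns` and the rule that a column counts as indexed when it is the leading column of some index.

```python
import sqlite3

def unindexed_id_columns(conn, suffix="_id"):
    """Return (table, column) pairs where a *_id column leads no index."""
    missing = []
    tables = [row[0] for row in conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name")]
    for table in tables:
        leading_columns = set()
        # index_list rows: (seq, name, unique, origin, partial)
        for index_row in conn.execute(f'PRAGMA index_list("{table}")').fetchall():
            # index_info rows: (seqno, cid, name)
            parts = conn.execute(f'PRAGMA index_info("{index_row[1]}")').fetchall()
            if parts:
                leading_columns.add(min(parts, key=lambda part: part[0])[2])
        # table_info rows: (cid, name, type, notnull, dflt_value, pk)
        for column_row in conn.execute(f'PRAGMA table_info("{table}")').fetchall():
            name = column_row[1]
            if name.endswith(suffix) and name not in leading_columns:
                missing.append((table, name))
    return missing

# Hypothetical schema used only to exercise the check.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, product_id INTEGER);
CREATE TABLE items (id INTEGER PRIMARY KEY, order_id INTEGER);
CREATE INDEX idx_orders_customer ON orders (customer_id);
""")
missing = unindexed_id_columns(conn)
conn.close()
print(missing)
```

Other database engines expose index metadata through their own catalog views, so the equivalent query would look different there.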